In the macroscopic world, people, planets, and stars follow a set of physical laws that all matter obeys. But what happens when those laws are observed at the scale of microscopic particles? As modern circuitry strives for ever smaller silicon chips, this raises the question: how small can we go? And as scientists experiment with the first quantum computers, what are the next steps in scientific discovery using such a device?

History of Computers

Before tackling these questions, it is crucial to understand the fundamentals of computers and their transformative history. In the early 20th century, a question arose about how mental labor, such as decision-making, could be mechanized in the same way that physical labor was being automated. In 1936, Alan Turing, a prominent mathematician and computer scientist, described the Turing machine, a model of computation built on logic and on coded symbols such as zeros and ones.

The Turing machine is a theoretical computational device that reads and writes symbols, such as a binary system of zeros and ones, on an infinitely long memory tape. Given enough time and memory, the machine can in principle carry out any computation that can be described as a step-by-step procedure, following a set of rules and states that tell it when to change a value, move to the next value, or halt.1 Although the concept of reading and transforming binary code is simple, it became the foundation of modern digital computers.
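To make the read, write, move, and halt cycle concrete, here is a minimal sketch of a single-tape Turing machine in Python. The rule table, the state names (`seek_end`, `carry`, `halt`), and the `run_turing_machine` helper are hypothetical illustrations rather than anything taken from the cited tutorial; this particular machine adds one to a binary number.

```python
from collections import defaultdict

# Hypothetical "binary increment" machine.
# (state, symbol read) -> (symbol to write, head move, next state)
# "_" is the blank symbol; moves are -1 (left), +1 (right), 0 (stay).
RULES = {
    ("seek_end", "0"): ("0", +1, "seek_end"),
    ("seek_end", "1"): ("1", +1, "seek_end"),
    ("seek_end", "_"): ("_", -1, "carry"),  # passed the last digit: start adding
    ("carry", "1"): ("0", -1, "carry"),     # 1 + carry = 0, keep carrying left
    ("carry", "0"): ("1", 0, "halt"),       # 0 + carry = 1, done
    ("carry", "_"): ("1", 0, "halt"),       # ran off the left edge: new leading 1
}

def run_turing_machine(tape_str, rules, start="seek_end", max_steps=10_000):
    """Simulate a single-tape Turing machine until it reaches the 'halt' state."""
    # A dict-backed tape acts like an unbounded tape: unvisited cells read as blank.
    tape = defaultdict(lambda: "_", enumerate(tape_str))
    head, state, steps = 0, start, 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        write, move, state = rules[(state, tape[head])]
        tape[head] = write
        head += move
        steps += 1
    # Read back the non-blank stretch of tape, left to right.
    cells = [i for i, s in tape.items() if s != "_"]
    return "".join(tape[i] for i in range(min(cells), max(cells) + 1))

print(run_turing_machine("1011", RULES))
```

For example, `run_turing_machine("1011", RULES)` returns `"1100"`, and `"111"` becomes `"1000"` as the carry runs off the left edge onto fresh blank tape.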

The next stage in understanding computers is the incorporation of electricity and circuitry. When a Turing machine is broken down, its computational abilities can be separated into three components: the main memory, the arithmetic logic unit (ALU), and the control unit (CU). Digital computers, also known as classical computers, follow this blueprint but carry it out with electricity. A computer chip operates through a hierarchy of modules, logic gates, and transistors. As the basic unit of data processing, a transistor can be seen as a switch that either lets an electrical signal through or blocks it.
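To illustrate how switches become logic gates, and how logic gates become arithmetic, here is a hedged sketch that models each transistor as an idealized on/off switch, ignoring voltages, timing, and real circuit design. The helper names are hypothetical. Two switches in series form a NAND gate, every other gate is built from NAND, and a chain of full adders performs the kind of binary addition an ALU carries out.

```python
def switch(control, current):
    """Idealized transistor: passes `current` only when `control` is 1."""
    return current if control else 0

def nand(a, b):
    # Two switches in series connect the output to ground only when both inputs are 1;
    # otherwise the output stays pulled up to 1.
    path_to_ground = switch(b, switch(a, 1))
    return 1 - path_to_ground

# Every other gate can be built from NAND alone.
def not_(a):
    return nand(a, a)

def and_(a, b):
    return not_(nand(a, b))

def or_(a, b):
    return nand(not_(a), not_(b))

def xor(a, b):
    return and_(or_(a, b), nand(a, b))

def full_adder(a, b, carry_in):
    """Add three bits; return (sum_bit, carry_out)."""
    partial = xor(a, b)
    sum_bit = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return sum_bit, carry_out

def add_binary(x, y, width=8):
    """Ripple-carry addition of two unsigned integers using only the gates above."""
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result

print(add_binary(25, 17))
```

Here `add_binary(25, 17)` returns `42`; because the sketch uses a fixed 8-bit width, sums above 255 wrap around, much as a fixed-width hardware adder would.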

After the introduction of digitized information, hardware manufacturing grew more efficient while transistors grew steadily smaller. In 1965, Gordon Moore, an engineer who went on to co-found the chip manufacturer Intel, observed that the number of transistors on a microchip was doubling at a regular pace; in 1975 he revised this projection to a doubling roughly every two years, a trend now known as Moore’s law.2 For the count to keep doubling without chips growing ever larger, each individual transistor has to shrink. However, if transistors were to shrink to the size of an atom, the behavior of the circuit would be governed by the laws of quantum mechanics.3
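The doubling described above compounds quickly. As a rough sketch, assume a chip of fixed area and one doubling every two years; the starting count of 1,000 transistors is a hypothetical round number. If the count rises by a factor k, the area available to each transistor falls by k, so each of its linear dimensions must shrink by roughly √k.

```python
def projected_transistors(n0, years, doubling_period=2.0):
    """Transistor count after `years`, assuming one doubling every `doubling_period` years."""
    return n0 * 2 ** (years / doubling_period)

def linear_shrink_factor(years, doubling_period=2.0):
    """How many times narrower each transistor must get on a chip of fixed area.

    Area per transistor falls by the same factor the count rises,
    so each linear dimension falls by the square root of that factor.
    """
    return (2 ** (years / doubling_period)) ** 0.5

for years in (10, 20, 40):
    print(years, projected_transistors(1_000, years), linear_shrink_factor(years))
```

Under these assumptions, 20 years of doubling gives 2^10 = 1,024 times as many transistors, which means each transistor must be about 32 times narrower.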

This quantum mechanical behavior can be introduced through Heisenberg’s Indeterminacy Principle, also known as the Uncertainty Principle. The principle states that an electron’s position and momentum (its mass times its velocity) cannot both be known with arbitrary precision at the same time; the more precisely one is pinned down, the less precisely the other can be. Until the electron is measured, scientists can only give the probability of finding it in a given region of space.4 This probabilistic behavior contradicts the common misconception that electrons follow fixed orbital paths around the nucleus.
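In symbols, the principle is usually written Δx · Δp ≥ ħ/2, where Δx and Δp are the uncertainties in position and momentum and ħ is the reduced Planck constant. The short calculation below is a sketch that treats an atom as a region about 0.1 nanometers across (an assumed, typical order of magnitude). It shows why the principle matters for atom-sized circuitry but not for everyday scales: an electron confined to an atom has an enormous minimum spread in velocity, while one confined to a centimeter does not. The helper name is hypothetical.

```python
HBAR = 1.054571817e-34            # reduced Planck constant, in joule-seconds
ELECTRON_MASS = 9.1093837015e-31  # electron rest mass, in kilograms

def min_velocity_spread(delta_x_m, mass_kg=ELECTRON_MASS):
    """Smallest velocity uncertainty (m/s) allowed by dx * dp >= hbar / 2."""
    delta_p = HBAR / (2 * delta_x_m)  # minimum momentum uncertainty
    return delta_p / mass_kg          # momentum = mass * velocity

print(f"{min_velocity_spread(1e-10):.2e} m/s")  # electron confined to ~0.1 nm
print(f"{min_velocity_spread(1e-2):.2e} m/s")   # electron confined to 1 cm
```

Confining the electron to about 0.1 nm forces a velocity spread of roughly 5.8 × 10^5 meters per second, while a 1 cm region allows a spread of only about 6 millimeters per second.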

Rather than treating these atomic-scale effects only as an obstacle, Richard P. Feynman, a distinguished theoretical physicist, proposed in the early 1980s an abstract model of a machine that could simulate quantum physics using a controllable quantum system of its own: the quantum computer.5 A device able to exploit the quantum properties of atoms could help scientists explore uncharted territory in encryption, simulation, astrophysics, materials science, drug development, and more. Before delving into a quantum computer’s potential uses, however, it is important to understand what makes it so powerful.

Classical vs Quantum

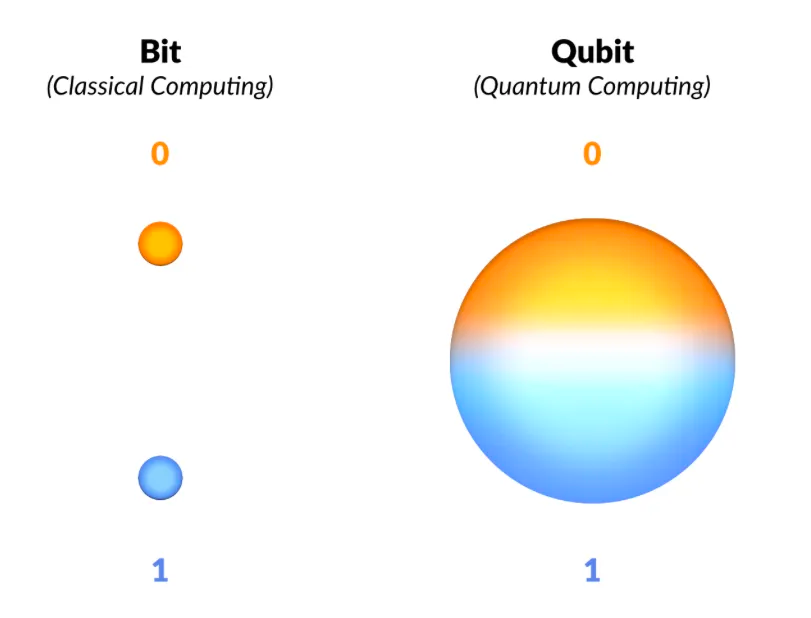

Within a classical computer, transistors process strings of bits, each of which is either a zero or a one. Bits are the building blocks of data within a computer, and combinations of them encode words, colors, and numbers.
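As an illustration of how bits encode different kinds of data, the sketch below writes a number, a short word, and a color as groups of eight bits. It assumes two common conventions, UTF-8 for text and one byte per red, green, and blue channel for color; real systems use many other encodings, and the `to_bits` helper is hypothetical.

```python
def to_bits(value, width=8):
    """Fixed-width binary string for a non-negative integer."""
    return format(value, f"0{width}b")

# A number: 42 fits in a single byte.
number_bits = to_bits(42)

# A word: each character becomes one or more UTF-8 bytes ("H" and "i" are one byte each).
word_bits = " ".join(to_bits(byte) for byte in "Hi".encode("utf-8"))

# A color: orange as (red, green, blue), one byte per channel.
color_bits = " ".join(to_bits(channel) for channel in (255, 165, 0))

print(number_bits)  # 00101010
print(word_bits)    # 01001000 01101001
print(color_bits)   # 11111111 10100101 00000000
```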

A famous thought experiment that has become ubiquitous is Schrödinger’s cat. In the experiment, a cat is placed in a sealed box with a small amount of radioactive substance; if an atom of the substance decays, it triggers the release of a poison that kills the cat. Without any observation or measurement of the cat’s present state, the cat is described, from the point of view of the outside world, as being both dead and alive at the same time. Similarly, a qubit, the basic unit of information in a quantum computer, can exist as both a zero and a one at once. This ability to exist in a combination of zero and one is called superposition.
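Superposition has a precise mathematical form. A qubit’s state can be written as α|0⟩ + β|1⟩, where α and β are numbers called amplitudes with |α|² + |β|² = 1; when the qubit is measured, it gives 0 with probability |α|² and 1 with probability |β|² (the Born rule). The sketch below simulates repeated measurements with a classical random number generator. It only reproduces the measurement statistics, not the underlying physics, and the `measure` helper and its `seed` parameter are hypothetical.

```python
import math
import random

def measure(alpha, beta, shots, seed=0):
    """Simulate measuring the qubit alpha|0> + beta|1> `shots` times."""
    if not math.isclose(abs(alpha) ** 2 + abs(beta) ** 2, 1.0):
        raise ValueError("amplitudes must satisfy |alpha|^2 + |beta|^2 = 1")
    p_zero = abs(alpha) ** 2  # Born rule: probability of reading 0
    rng = random.Random(seed)
    counts = {"0": 0, "1": 0}
    for _ in range(shots):
        counts["0" if rng.random() < p_zero else "1"] += 1
    return counts

amp = 1 / math.sqrt(2)  # equal superposition of 0 and 1
print(measure(amp, amp, shots=1000))
```

With α = β = 1/√2, roughly half of 1,000 simulated measurements read 0 and half read 1, yet every single measurement yields a definite bit, much as opening the box reveals a cat that is either dead or alive.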

Another way to understand how a qubit functions is to imagine a spinning top. Like a classical bit, the top can spin up or down, with up representing the excited state (one) and down representing the ground state (zero). A qubit, however, is like a top that can spin up, down, and in all directions simultaneously.6
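The “all directions” picture is usually made precise with the Bloch sphere: every single-qubit state corresponds to one point on a sphere, set by a tilt angle θ and a rotation angle φ, and points between the poles are superpositions. As a sketch under the common textbook convention, the top of the sphere (θ = 0) is the state 0 and the bottom (θ = π) is the state 1, which is the reverse of the up = one labeling used for the spinning top. The helper names are hypothetical.

```python
import cmath
import math

def bloch_state(theta, phi):
    """Amplitudes (alpha, beta) of the qubit at angles (theta, phi) on the Bloch sphere."""
    alpha = math.cos(theta / 2)
    beta = cmath.exp(1j * phi) * math.sin(theta / 2)
    return alpha, beta

def prob_one(theta, phi):
    """Probability that measuring the qubit gives 1; it depends only on the tilt theta."""
    _, beta = bloch_state(theta, phi)
    return abs(beta) ** 2

# Every point on the equator (theta = pi/2) is an equal superposition,
# whichever direction phi it points in around the sphere.
for phi in (0.0, math.pi / 2, math.pi):
    print(round(prob_one(math.pi / 2, phi), 3))  # 0.5 each time
```

The rotation φ does not change the odds of reading 0 or 1 on its own, but it does affect how the qubit responds to later gates, which is part of what quantum algorithms exploit.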

Apart from superposition, qubits can also display the quantum mechanical property of entanglement. Entanglement can be visualized by imagining a photon from a laser passing through a special (nonlinear) crystal that splits it into a pair of photons (particles of light that carry energy). Because the two photons are created together in the same event, they are entangled: their properties remain correlated no matter how far apart they travel. When one photon is detected with a particular polarization (the orientation of its oscillating light wave), its entangled partner, upon detection, shows a correlated polarization.7 Which combinations of polarizations appear depends on how a given experiment is designed, but the two photons always show a correlation with one another.
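The correlation can be mimicked with two qubits in the entangled state (|00⟩ + |11⟩)/√2, in which only the joint outcomes 00 and 11 have nonzero amplitude. The sketch below samples joint measurements of both qubits in the same basis. It is a classical simulation that reproduces the statistics of that one measurement setting, not a model of photon polarization, and it cannot capture the stronger-than-classical correlations that appear when the two partners are measured along different directions. The `BELL` table and `sample_pairs` helper are hypothetical.

```python
import random

# Amplitudes for two qubits, keyed by the joint outcome "ab".
# This is the entangled state (|00> + |11>) / sqrt(2).
BELL = {"00": 2 ** -0.5, "01": 0.0, "10": 0.0, "11": 2 ** -0.5}

def sample_pairs(state, shots, seed=1):
    """Draw joint measurement outcomes with probability |amplitude|^2 (Born rule)."""
    rng = random.Random(seed)
    outcomes = list(state)
    weights = [abs(amp) ** 2 for amp in state.values()]
    return rng.choices(outcomes, weights=weights, k=shots)

pairs = sample_pairs(BELL, shots=10)
print(pairs)                          # each pair is random: "00" or "11"
print(all(a == b for a, b in pairs))  # True: the two qubits always agree
```

Each individual outcome is random, 00 about half the time and 11 the other half, but the two halves of a pair never disagree.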

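Within a quantum computer, the role that transistor-based logic gates play in a classical computer is taken by quantum gates, which manipulate qubits and can entangle them with one another. Rather than computing with definite zeros and ones like a classical computer, a quantum computer uses its gates and entanglement to shape the qubits’ combined state, and the algorithm then reads out the final state by measuring the qubits. Describing the joint state of n entangled qubits takes 2^n numbers, so every added qubit doubles the size of the space the computer works in, and its computational capacity grows exponentially.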
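A classical state-vector simulation shows both ideas at small scale: n qubits are stored as a list of 2^n complex amplitudes, and gates are operations on that list. The sketch below, with hypothetical helper names and the assumption that qubit k corresponds to bit k of a list index, applies a Hadamard gate followed by a CNOT gate, the standard textbook recipe for entangling two qubits into the state (|00⟩ + |11⟩)/√2.

```python
import math

def zero_state(n_qubits):
    """The all-zeros state: 2**n_qubits amplitudes with all weight on index 0."""
    state = [0j] * (2 ** n_qubits)
    state[0] = 1 + 0j
    return state

def apply_hadamard(state, target):
    """Hadamard on qubit `target`: H|0> = (|0>+|1>)/sqrt2, H|1> = (|0>-|1>)/sqrt2."""
    h = 1 / math.sqrt(2)
    mask = 1 << target
    new = [0j] * len(state)
    for index, amp in enumerate(state):
        sign = -1 if index & mask else 1
        new[index & ~mask] += h * amp        # contribution to target = 0
        new[index | mask] += sign * h * amp  # contribution to target = 1
    return new

def apply_cnot(state, control, target):
    """CNOT: flip qubit `target` in every basis state where qubit `control` is 1."""
    new = [0j] * len(state)
    for index, amp in enumerate(state):
        dest = index ^ (1 << target) if index & (1 << control) else index
        new[dest] += amp
    return new

state = zero_state(2)
state = apply_hadamard(state, target=0)
state = apply_cnot(state, control=0, target=1)
print([round(abs(a) ** 2, 3) for a in state])  # [0.5, 0.0, 0.0, 0.5]
```

Adding one more qubit doubles the list: `zero_state(30)` would already hold a little over a billion amplitudes.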

To maintain entanglement, many quantum computers, such as those built from superconducting circuits, are kept at temperatures near absolute zero. This helps isolate the quantum system from its external environment, preventing decoherence and keeping the entangled states intact for longer. Decoherence occurs when qubits interact with their surroundings and lose their superposition and entanglement, effectively behaving like ordinary classical bits. It can be caused by thermal noise, electromagnetic radiation, and other environmental disturbances.8
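Decoherence is often summarized by a coherence time, commonly labeled T2, over which a qubit’s superposition fades. As a deliberately simplified sketch, the code below models that fading as a single exponential decay; the 100 microsecond value is a hypothetical round number rather than a figure for any particular machine, and real devices lose coherence through several mechanisms at once. The helper name is hypothetical.

```python
import math

def remaining_coherence(t_us, t2_us):
    """Fraction of coherence left after t_us microseconds in a simple exponential model."""
    return math.exp(-t_us / t2_us)

T2_US = 100.0  # hypothetical coherence time, in microseconds

for t in (0, 10, 100, 500):
    print(f"after {t:>3} us: {remaining_coherence(t, T2_US):.3f} of the coherence remains")
```

Under this model, about a third of the coherence remains after one T2 and under one percent after five, so a computation has to finish its gates well within that window, one reason for cooling and shielding the hardware.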

Real-world Applications

One common misconception is that a quantum computer is simply faster than a classical computer. In reality, a quantum computer is no faster at everyday calculations, such as simple arithmetic, than a standard digital device such as a calculator, phone, or computer.9

Instead, quantum computers are most useful for working through enormous numbers of combinations within large pools of data. This capacity could be used to tackle pressing problems in solid-state physics and the design of new materials.10 For example, finding stable chemical structures and their energies can be framed as an optimization problem suited to quantum annealing, a process in which a quantum computer searches for the optimal (lowest-energy) solution among a large set of candidates by taking advantage of quantum properties such as entanglement and superposition. Through this method, materials scientists could develop new materials for batteries, industrial nitrogen fixation, or catalysis.10
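Quantum annealers are typically programmed with an energy function over binary variables, such as the Ising model, in which each variable (a “spin”) is −1 or +1 and the best solution is the configuration with the lowest energy. The sketch below uses hypothetical biases and couplings and solves the problem classically by brute force over all 2^n configurations. It is not an annealer; it only shows what “finding the lowest-energy solution” means and why exhaustive search stops scaling.

```python
from itertools import product

# Hypothetical 4-spin problem. h: per-spin bias; J: pairwise couplings.
# Energy E(s) = sum_i h_i * s_i + sum_(i,j) J_ij * s_i * s_j  (lower is better).
H = [0.5, -1.0, 0.0, 0.3]
J = {(0, 1): -1.0, (1, 2): 0.8, (2, 3): -0.6, (0, 3): 0.4}

def energy(spins, h=H, j=J):
    """Ising energy of one spin configuration."""
    field = sum(hi * si for hi, si in zip(h, spins))
    coupling = sum(jij * spins[a] * spins[b] for (a, b), jij in j.items())
    return field + coupling

def brute_force_ground_state(n_spins=4):
    """Check all 2**n configurations classically and return the lowest-energy one."""
    best = min(product((-1, 1), repeat=n_spins), key=energy)
    return best, energy(best)

print(brute_force_ground_state())
```

Here the lowest-energy configuration is (1, 1, −1, −1) with energy −3.6. Checking every configuration takes 2^n energy evaluations, which doubles with each added variable; annealing hardware aims to settle into low-energy states without that exhaustive search.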

Quantum computers are also widely expected to affect encryption and decryption. Breaking widely used encryption methods would take classical computers years or more, whereas quantum computers could theoretically do it in days.11 This poses a serious future risk to asymmetric (public-key) encryption; symmetric encryption is expected to be weakened less severely, and longer keys can offset much of the threat.
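Public-key schemes such as RSA rest on the difficulty of factoring a large number n into its two prime factors, and Shor’s algorithm would let a sufficiently large quantum computer do that efficiently. The toy example below uses textbook-sized numbers and a hypothetical helper name to show why factoring is the whole game: once n is split into p and q, the private key follows directly. Trial division works here only because n is tiny; real keys use moduli hundreds of digits long. The modular-inverse form `pow(e, -1, m)` requires Python 3.8 or later.

```python
def factor_by_trial_division(n):
    """Find the two prime factors of a small semiprime n (hopeless at real key sizes)."""
    for p in range(2, int(n ** 0.5) + 1):
        if n % p == 0:
            return p, n // p
    raise ValueError("n has no nontrivial factor")

# Textbook-sized RSA key.
p, q = 61, 53
n = p * q                           # public modulus: 3233
e = 17                              # public exponent
d = pow(e, -1, (p - 1) * (q - 1))   # private exponent: 2753

message = 65
ciphertext = pow(message, e, n)     # anyone can encrypt with (n, e): 2790

# An attacker who can factor n can rebuild the private key from public data alone.
p2, q2 = factor_by_trial_division(n)
d_recovered = pow(e, -1, (p2 - 1) * (q2 - 1))
print(pow(ciphertext, d_recovered, n))  # 65
```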

Lastly, one of the most anticipated uses of quantum computers is simulation. This includes simulations in branches of science such as astronomy, aerodynamics, natural systems, chemical compositions, and more. Being able to model natural phenomena in a programmable fashion opens the door to new insight into physical problems. Because a quantum computer is itself a quantum system, scientists could sidestep some of the hardware limits that make such systems expensive to simulate on classical machines and better understand their properties.
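To see why classical hardware struggles, consider a brute-force classical simulation that stores one complex amplitude for every possible state of n qubits. This sketch assumes 16 bytes per amplitude (two 64-bit floating-point numbers) and ignores the cleverer classical methods that work for some special cases; the helper name is hypothetical.

```python
def state_vector_bytes(n_qubits, bytes_per_amplitude=16):
    """Bytes needed to store all 2**n amplitudes, assuming 16 bytes per complex number."""
    return (2 ** n_qubits) * bytes_per_amplitude

for n in (10, 30, 50):
    print(f"{n} qubits: {state_vector_bytes(n):,} bytes")
```

Ten qubits need only 16,384 bytes, but 30 qubits already need about 16 gibibytes and 50 qubits about 16 pebibytes, far beyond any single machine’s memory.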

References

- https://www.cl.cam.ac.uk/projects/raspberrypi/tutorials/turing-machine/one.html

- https://www.investopedia.com/terms/m/mooreslaw.asp

- Hagar, Amit and Michael Cuffaro (2022). Quantum Computing. The Stanford Encyclopedia of Philosophy. Retrieved October 9, 2023, from https://plato.stanford.edu/archives/fall2022/entries/qt-quantcomp

- (n.d.). What Is the Uncertainty Principle and Why Is It Important? Caltech Science Exchange. Retrieved November 3, 2023, from https://scienceexchange.caltech.edu/topics/quantum-science-explained/uncertainty-principle#:~:text=Formulated%20by%20the%20German%20physicist,about%20its%20speed%20and%20vice

- Rusty Flint (2023). Richard Feynman and his contributions to Quantum Computing and Nanotechnology. Quantum Zeitgeist. Retrieved October 9, 2023, from https://quantumzeitgeist.com/richard-feynman-and-his-contributions-to-quantum-computing-and-nanotechnology/

- Star Talk (2023). How the Quantum Computer Revolution Will Change Everything with Michio Kaku & Neil deGrasse Tyson [Video]. YouTube. Retrieved October 9, 2023, from https://www.youtube.com/watch?v=IfvoBlDUrPU&t=1872s

- Alexis Shasta (2019). Observer. Quantum Physics Lady Encyclopedia of Quantum Physics. Retrieved October 9, 2023, from https://quantumphysicslady.org/glossary/observer/#:~:text=In%20quantum%20mechanics%2C%20an%20observer,of%20measurement%20in%20quantum%20mechanics.

- Madali Nabil (2023). Entanglement and its role in quantum computing. Medium. Retrieved October 14, 2023, from https://medium.com/@madali.nabil97/entanglement-and-its-role-in-quantum-computing-2cbb1ff74e77

- Cleo Abram (2023). Quantum Computers, explained with MKBHD [Video]. YouTube. Retrieved October 9, 2023, from https://www.youtube.com/watch?v=e3fz3dqhN44

- Daley, A.J., Bloch, I., Kokail, C. et al. (2022). Practical quantum advantage in quantum simulation. Nature. Retrieved October 9, 2023, from https://www.nature.com/articles/s41586-022-04940-6

- Ryan Arel (2023). Explore the impact of quantum computing on cryptography. Retrieved November 3, 2023, from https://www.techtarget.com/searchdatacenter/feature/Explore-the-impact-of-quantum-computing-on-cryptography#:~:text=Quantum%20computing%20could%20impact%20encryption’s,in%20days%20with%20quantum%20computers.

Image References

Banner Image: https://www.wired.com/story/wired-guide-to-quantum-computing/

Figure 1: https://en.wikipedia.org/wiki/Turing_machine#/media/File:Turing_Machine_Model_Davey_2012.jpg

Figure 2: Karel D. (2019) The computational power of Quantum Computers: an intuitive guide. Retrieved October 9, 2023, from https://medium.com/@kareldumon/the-computational-power-of-quantum-computers-an-intuitive-guide-9f788d1492b6

Figure 3: Britannica, T. Editors of Encyclopaedia (2023, September 15). polarization. Encyclopedia Britannica. Retrieved October 14, 2023, from https://www.britannica.com/science/polarization-physics